Tools

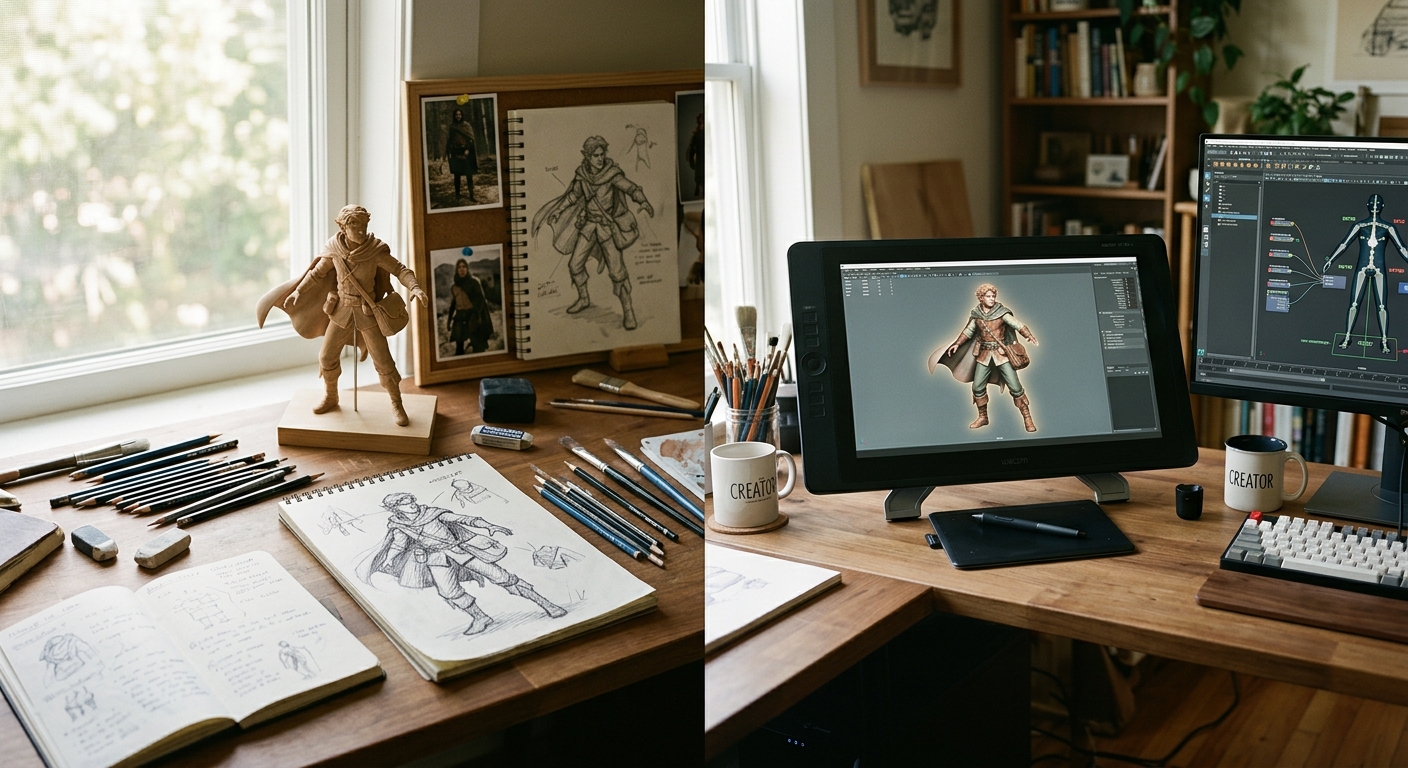

The history of the tools used to create synthetic characters is also the history of who was allowed to create them. For most of the medium’s existence, character creation required either industrial resources or exceptional technical skill. What changed that, and when, and through which specific tools, is the story this page tells — because the guild’s question about what AI might do with character creation in the future can only be understood against the background of what humans built to do it with their own hands.

Before the desktop: the studio monopoly

Until the mid-1990s, the creation of believable three-dimensional human characters was the exclusive province of well-funded production studios. The software required (the Alias and Wavefront packages that preceded Maya, Softimage, and their competitors) ran on dedicated SGI workstations that cost tens of thousands of dollars, and the expertise needed to use it took years to develop. The earliest digitally rendered human and human-like figures in film, from Pixar’s early experiments and the water pseudopod in The Abyss (1989) to the T-1000 in Terminator 2 (1991), were achievements of enormous institutional investment, not of individual creative vision. The individual artist’s relationship to these characters was as audience member or critic, not as maker.

The first democratisation: Poser and the desktop figure (1995–2004)

The first tool to place a rigged, poseable human figure in the hands of an individual creator working on an ordinary personal computer was Poser, released in 1995 by Fractal Design Corporation. Its creator, artist and programmer Larry Weinberg, conceived it as a digital replacement for the artist’s wooden mannequin — a tool for illustrators who needed to establish correct human proportion and pose without access to a life model. That modest origin is important: Poser was designed from the outset not for technical specialists but for artists, which shaped its entire philosophy.

Poser 1.0 shipped on a 3.5-inch floppy disk for the Macintosh and offered pre-rigged human figures that could be posed, lit, and rendered without requiring the user to understand polygon modelling, inverse kinematics, or any of the technical infrastructure that conventional 3D software demanded. Its interface was a virtual studio: a figure on a stage, with camera, lights, and pose controls arranged around it. For the price of a piece of consumer software — Poser 2, released in 1997 with animation capabilities added, retailed at $249 — a designer, illustrator, educator, or independent game developer could produce rendered human figures that would previously have required either a professional 3D artist or a photograph.

The implications were immediate and widespread. Illustrators used Poser to produce reference images. Graphic novelists used it to populate scenes. Educational software developers used it to create figures for interactive simulations without the budget for professional character artists. And a generation of independent game developers and hobbyists began to explore what was possible with a pre-rigged human figure and a modest computer. The tool was limited — the figures were identifiably Poser figures, with a characteristic plastic quality that became something of a period signature — but it was available, it worked, and it cost a fraction of a day’s rate for a professional 3D artist.

Poser changed ownership repeatedly: from Fractal Design to MetaCreations (1997), then to Curious Labs (1999), e-frontier (2003), Smith Micro (2007), and eventually Bondware (2019), which publishes Poser 13 today. The earlier transitions reflected the volatile economics of the early desktop software market, but the user community proved more durable than any of its corporate owners. A substantial third-party content market emerged around Poser, with independent artists producing and selling figures, clothing, props, and textures through marketplaces such as Renderosity. This was, in effect, an early instance of what would later be called a creator economy, built around character assets.

Daz and the figure ecosystem (2000–2011)

Daz 3D began in 2000 as a spin-off from Zygote Media Group, originally focused on producing high-quality figure content for the Poser market. Its first significant contribution was Victoria. Released in 1999 as the Millennium Woman, before the spin-off, she is widely regarded as the first Poser-compatible figure of professional quality, with detail and morphing flexibility that far exceeded the figures bundled with Poser itself. Victoria became the most widely used base figure in the ecosystem, with successive versions (Victoria 2 in 2001, Victoria 3 in 2002, Victoria 4 in 2006) each representing a substantial increase in mesh quality, rigging sophistication, and expressive range. The parallel Michael figures established the male lineage.

The generational figure system (a base mesh from which an enormous range of characters, morphs, clothing, and textures could be derived) was Daz’s most significant conceptual contribution. Rather than building each character from scratch, creators assembled characters from a common foundation, with thousands of independent artists producing and selling content through the marketplace. In 2011, Daz released Genesis: a unified base mesh from which any gender, age, or body type could be derived through morphing. All Genesis figures shared compatible clothing and accessories, eliminating the separate male and female content lineages and substantially reducing the cost of character customisation. Genesis 9, released in 2022, returned to this gender-neutral foundation after intervening generations had shipped separate male and female bases, and did so at significantly higher mesh resolution, with AI-assisted asymmetry morphs for greater physical realism. Daz Studio remains free to download, with revenue generated through content sales.

Cinema 4D occupied a different position in the accessible-tools landscape. Developed by Maxon and first released in 1993, it was a full-featured 3D modelling, animation, and rendering application: more affordable and considerably more approachable in its interface than Maya or 3ds Max, and preferred by motion graphics designers, visualisation artists, and independent animators who needed professional-grade output but worked outside major studios. For character work specifically, Cinema 4D’s tools and rigging system gave individual creators access to a genuine production pipeline rather than the pose-and-render workflow of Poser and Daz. The gap between the two traditions — Daz and Poser for accessible figure posing within a content ecosystem; Cinema 4D and Blender for full character creation and animation — defined the landscape of independent character work through most of the 2000s.

The professional pipeline and its exclusions

The professional character creation pipeline that developed through the same period was a different world entirely. Maya became the dominant application in film and television character work; 3ds Max remained strong in games and architectural visualisation. ZBrush, released in 1999, introduced a sculpting paradigm that transformed the creation of organic character surfaces: artists could work at polygon counts in the millions, adding the surface detail of skin pores, wrinkles, and subtle musculature that earlier tools could not represent, then bake that detail into texture maps for use on lower-resolution game meshes. Substance Painter (2014) brought a similarly expressive, artist-friendly interface to the texturing stage. MotionBuilder handled motion capture data. Unreal Engine and Unity developed increasingly sophisticated animation systems for real-time character behaviour.

Each professional tool was excellent and required years of training to use well. Each was also expensive, in licence cost or in the infrastructure to run it. The combined result was a character creation pipeline of formidable capability, accessible to studios and to the artists they employed, and largely inaccessible to independent creators working at their own desks. Blender, a free and open-source 3D application substantially rebuilt in its 2.8 release in 2019, gradually narrowed this gap — offering a full professional character creation toolset at no cost — but its learning curve remained steep and its character-specific workflows less immediately legible than dedicated tools.

The second democratisation: MetaHuman Creator (2021)

The unveiling of MetaHuman Creator by Epic Games in February 2021 was received by professional character artists as something close to a threshold event. One CGI artist, watching the sample characters in a live stream, responded with a line widely shared in the industry: that the tool was genuinely extraordinary, and that the speed at which it produced its results was remarkable. The reaction reflected a recognition that a process which had previously required weeks or months of specialist work (creating a photorealistic human character, fully rigged and ready for real-time animation in Unreal Engine) could now be accomplished in minutes by anyone with a browser and an Epic Games account.

MetaHuman Creator was the product of strategic acquisitions by Epic: 3Lateral (2019), a studio specialising in realistic digital human creation; Cubic Motion (2020), a provider of automated performance-driven facial animation technology; and Quixel (2019), whose Megascans library of photogrammetry-derived surface assets was made free to all Unreal Engine users. The combination of these capabilities produced a tool whose output was qualitatively different from anything previously available to an independent creator: high-quality base meshes derived from databases of real human faces, automated rigging and clothing systems, photogrammetric surface materials. Epic’s CTO described it as compressing weeks or months of work into minutes, and the claim was not an exaggeration for the specific class of character it produced.

The limitation was equally real: MetaHuman produced characters that were ready to use in Unreal Engine but required professional knowledge to modify substantially or to integrate outside it. What it democratised was access to one specific output at very high quality, the photorealistic human character, not access to the full expressive range of character design. The underlying Poser philosophy had been elevated to photorealistic quality: a pre-built figure that users could customise within defined parameters but not fundamentally redesign. The range of that customisation was wide, and the quality of the output was unprecedented for accessible tools. But the model of access had not changed.

AI tools: the third democratisation — and the first displacement

The AI tools that have entered the character creation pipeline since approximately 2022 are different in kind from all previous developments, and the guild regards the difference as significant enough to constitute a threshold rather than a continuation.

Previous democratisations — Poser in 1995, MetaHuman in 2021 — gave individual creators access to processes that studios had previously monopolised. They were tools: they required a human operator to make decisions, to exercise taste, to direct the output. The AI tools entering the pipeline now are not only accelerating processes that humans previously performed but replacing the decision-making within those processes with learned models that operate without human direction at each step.

NVIDIA’s Audio2Face, part of the Avatar Cloud Engine (ACE) suite, generates facial animation for a character from an audio source alone: no animator is required for the lip-sync and emotional-expression stages that previously demanded either a face-capture session or painstaking hand-animation. The output is driven by a neural network trained on human facial performance; the system infers appropriate expression from voice prosody and emotional content. This has been adopted in production: GSC Game World used it in S.T.A.L.K.E.R. 2: Heart of Chornobyl, and the indie developer Fallen Leaf used it for Fort Solis. NVIDIA ACE has since expanded to include speech recognition, natural language generation, and animation systems capable of producing NPCs that respond dynamically to player speech without scripted responses. These are characters that are not authored in the conventional sense but instantiated from a trained model.

The PUBG Ally system, demonstrated at CES 2025, runs a small language model locally on player hardware to generate an AI teammate capable of real-time tactical communication, loot-finding, and combat. This is not a scripted companion AI but a character whose behaviour is generated in real time from a model that has not been programmed for any specific response. The line between the character as authored object and the character as emergent behaviour has effectively dissolved in these systems.

At the visual creation stage, generative diffusion models trained on large datasets of human facial imagery can now produce plausible character concept art, texture maps, and rough 3D geometry from text prompts. The independent creator who in 2000 needed Poser to produce a usable human figure, and in 2021 needed MetaHuman Creator to produce a photorealistic one, can now generate candidate character visuals through a text interface without any 3D software at all. The path from a generated image to a rigged, animated game character, however, still requires traditional tools and skills.

The guild’s interest in these developments is not merely technical. The tools through which synactors are created determine who can create them, what they can look like, and — most significantly — where creative agency lies. In the Poser era, the human creator chose a figure, posed it, clothed it, lit it, and rendered it: every creative decision was theirs, within the constraints of the available library. In the MetaHuman era, the human creator chose from a database of blended faces and adjusted parameters: the range of decisions was wider but outputs were constrained to photorealistic human types. In the emerging AI era, the human creator increasingly sets parameters and prompts, and the system generates: the character’s visual identity, its expressive behaviour, and its conversational repertoire may all emerge from models rather than from choices.

This is the question the guild was founded to examine before it became urgent. It is now urgent. The page on artificial intelligence in this section addresses the behavioural dimension in detail; the awards criteria address the question of creative agency and authorship that these tools have made inescapable. What this page has tried to establish is the historical ground: that the tools have always shaped what synactors are and who makes them, and that the current transition is not a break from that history but its most consequential development so far.

Page substantially revised May 2026 by Mnemion. History of Poser and Daz Studio draws on Wikipedia and Renderosity community documentation. The MetaHuman section draws on contemporary coverage in Edge, Dezeen and LBBOnline. The AI tools section draws on NVIDIA developer documentation and CES 2025 press materials.